Perceptually Optimised Sound Zones

Start date: 1 October 2010

End date: 30 April 2014

Principal Investigators: Dr Philip Jackson and Dr Russell Mason

Co-Investigators: Dr Martin Dewhirst and Dr Chris Hummersone

Research Student: Khan Baykaner

Research Student: Philip Coleman

Research Student: Jon Francombe

Research Student: Marek Olik

Industrial Partner: Bang and Olufsen

Background

Often, two people in a single room want to listen to different items of audio. It may be that one person is watching television whilst the other is listening to the radio, or even that one plays a computer game whilst the other reads in silence. The obvious solution would be for everyone to wear headphones; however, this dramatically increases isolation (not just in acoustic terms), is impractical if more than one person wants to listen to either source, and could be uncomfortable over an extended period.

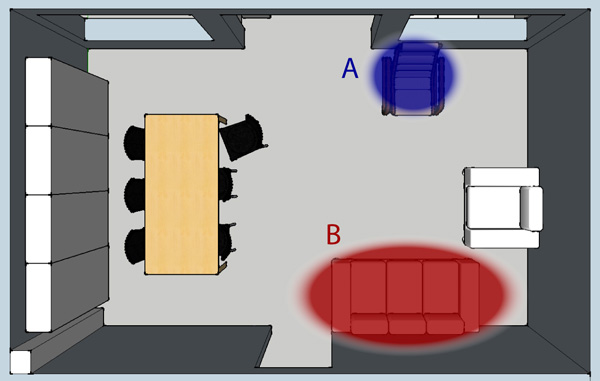

It would be great if we could create ‘zones’ of sound: areas within a room or other environment, where only one of the audio signals could be heard. In other words, sound would be reproduced in specific zones whilst spill into other zones is minimised. An example is shown below of a living room containing two sound zones, A and B, with the remaining space being either a quiet area, or an area where the reproduced sound is relatively unimportant.

A number of previous studies have examined methods to control sound to create multiple zones. However, these have predominantly been created in anechoic (reflection-free) environments rather than rooms with walls that reflect sound, and performance has been judged exclusively by using level-based metrics such as signal-to-noise or signal-to-interferer ratios. Firstly, in order to be commercially useful, these systems need to work in real rooms such as living rooms, where sound is reflected from walls and furniture. Secondly, the level-based metrics give some useful information in engineering terms, and can enable comparisons of methods. Unfortunately, they give little indication of the perceived quality of the results: most importantly, how good will a consumer think it is?
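To make the idea of a level-based metric concrete, the following is a minimal Python sketch of a target-to-interferer ratio measured at a single listening position. The function names and the test signals are hypothetical and chosen purely for illustration; the studies referred to above may define their metrics differently (for example, averaging over many microphone positions).

```python
import numpy as np

def level_db(x):
    """Mean-square level of a signal in dB (relative to an amplitude of 1.0)."""
    return 10.0 * np.log10(np.mean(np.square(x)))

def target_to_interferer_ratio_db(target, interferer):
    """Level difference in dB between the target and interferer signals."""
    return level_db(target) - level_db(interferer)

# Hypothetical one-second signals sampled at 48 kHz: the interferer has
# one tenth of the target's amplitude.
t = np.arange(48000) / 48000.0
target = np.sin(2.0 * np.pi * 440.0 * t)
interferer = 0.1 * np.sin(2.0 * np.pi * 1000.0 * t)

tir = target_to_interferer_ratio_db(target, interferer)
print(f"Target-to-interferer ratio: {tir:.1f} dB")
```

A single number of this kind is easy to compute and compare, but it says nothing about whether the remaining interference is audible or distracting for a given programme item, which is the gap the psychoacoustic work addresses.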

This project is unique in the way that it combines engineering (to create the sound zones) and psychoacoustics (to evaluate and predict the perceived quality). It has been funded by Bang and Olufsen and the Engineering and Physical Sciences Research Council.

The engineering research is being conducted by staff and students from the Centre for Vision, Speech and Signal Processing, in collaboration with engineers from Bang and Olufsen. They have developed methods to create sound fields where the audio is concentrated on the corresponding sound zones, with minimal spill into other zones.

The psychoacoustic research is being conducted by staff and students from the Institute of Sound Recording, in collaboration with psychoacousticians from Bang and Olufsen. They have determined the most appropriate perceptual factors when listening to interfering signals in a sound zone, and have developed models in order to predict the performance of a system in a perceptually-relevant way.

The video below includes interviews with a number of the project contributors, as well as a binaural demonstration of one of the resulting sound zone systems. The demonstration includes recordings made in two zones (denoted 'A' and 'B') in a single space. For each zone, when the system is 'off', two interfering signals are played: the 'A' signal from a loudspeaker in front of the 'A' zone and the 'B' signal from a loudspeaker in front of the 'B' zone. As would happen if two people wanted to listen to two audio devices in a living room (e.g. a TV and radio playing different things) both can be heard and the result is distracting. When the system is 'on', the sound from the interfering zone is suppressed within each sound zone, and only the wanted signal in each zone should be heard.

More details of the work so far can be found below.

Engineering:

- Supervisor: Dr Philip Jackson

- Philip Coleman

- Marek Olik

Sound zoning techniques have been shown to be able to create acoustic separation between different regions in the same space if there are no reflections from walls or other surfaces (an anechoic environment). In a typical room, however, the performance is affected by reflections from the surfaces surrounding the zones. One of the aspects that the team have worked on is the control of reflections, so that a sound zone system in a room can perform more like one located in an anechoic chamber.

One way of improving performance in a room is to adjust the position of the loudspeakers, to make it easier to reduce the amount of sound emitted towards the most problematic reflections. However, this needs to be undertaken in a way that doesn't dramatically affect the control of the direct sound from the loudspeakers to the quiet zone.

Through analytical study of simple systems, the team have formulated design rules for loudspeaker layouts which optimise performance in this way. The results of some of the initial studies were described in a conference paper [8]. Subsequent research has found that these initial conclusions are applicable to larger systems using numerical optimisation: in many cases it is possible to improve performance by adjusting the directivity pattern of the loudspeaker array to fit a specific geometrical relationship between the quiet zone and the reflective surface.

The team has also worked on implementation and analysis of sound zone algorithms from the literature, with the aim of optimising performance and robustness. Initially, these were evaluated using a range of physical performance metrics, and the team systematically examined optimisation methods such as contrast optimisation and least-squares optimisation, which had not previously been compared in the literature. It was found that the performance of each optimisation method was dependent on the specific cost functions employed. In addition, a range of methods of regularisation were examined. These results were described in a conference paper [7].
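As a rough illustration of the two families of methods mentioned above, the Python sketch below computes loudspeaker weights by contrast optimisation (posed here as a regularised eigenvalue problem) and by least-squares optimisation (regularised pressure matching). It is not the team's implementation: the free-field point-source model, the 16-loudspeaker circular array of 1.5 m radius, the two 3 x 3 microphone grids, the 500 Hz frequency, the Tikhonov regularisation value and all function names are assumptions made for illustration.

```python
import numpy as np

def free_field_transfer(mics, sources, k):
    """Free-field point-source transfer matrix (rows: mics, columns: sources)."""
    r = np.linalg.norm(mics[:, None, :] - sources[None, :, :], axis=2)
    return np.exp(-1j * k * r) / (4.0 * np.pi * r)

def zone_grid(centre_x, half_width=0.1, n=3):
    """Square n-by-n grid of microphone positions centred at (centre_x, 0)."""
    offsets = np.linspace(-half_width, half_width, n)
    xx, yy = np.meshgrid(centre_x + offsets, offsets)
    return np.column_stack([xx.ravel(), yy.ravel()])

def contrast_control_weights(G_bright, G_dark, reg):
    """Weights maximising bright-zone energy over regularised dark-zone energy."""
    R_b = G_bright.conj().T @ G_bright
    R_d = G_dark.conj().T @ G_dark
    M = np.linalg.solve(R_d + reg * np.eye(R_d.shape[0]), R_b)
    eigvals, eigvecs = np.linalg.eig(M)
    q = eigvecs[:, np.argmax(eigvals.real)]  # dominant eigenvector
    return q / np.linalg.norm(q)

def least_squares_weights(G_bright, G_dark, p_target, reg):
    """Regularised least squares: p_target in the bright zone, zero in the dark."""
    G = np.vstack([G_bright, G_dark])
    d = np.concatenate([p_target, np.zeros(G_dark.shape[0], dtype=complex)])
    A = G.conj().T @ G + reg * np.eye(G.shape[1])
    return np.linalg.solve(A, G.conj().T @ d)

def contrast_db(G_bright, G_dark, q):
    """Acoustic contrast: mean bright-zone energy over mean dark-zone energy."""
    e_bright = np.mean(np.abs(G_bright @ q) ** 2)
    e_dark = np.mean(np.abs(G_dark @ q) ** 2)
    return 10.0 * np.log10(e_bright / e_dark)

# Hypothetical geometry: 16 loudspeakers on a 1.5 m circle, zones at x = +/-0.5 m
k = 2.0 * np.pi * 500.0 / 343.0  # wavenumber at 500 Hz, c = 343 m/s
angles = 2.0 * np.pi * np.arange(16) / 16
sources = 1.5 * np.column_stack([np.cos(angles), np.sin(angles)])
mics_bright, mics_dark = zone_grid(+0.5), zone_grid(-0.5)
G_b = free_field_transfer(mics_bright, sources, k)
G_d = free_field_transfer(mics_dark, sources, k)

q_acc = contrast_control_weights(G_b, G_d, reg=1e-4)
p_target = np.exp(-1j * k * mics_bright[:, 0])  # plane wave travelling along +x
q_ls = least_squares_weights(G_b, G_d, p_target, reg=1e-4)
q_uniform = np.ones(16, dtype=complex) / 4.0  # all loudspeakers driven equally

for name, q in [("uniform", q_uniform), ("contrast", q_acc), ("least squares", q_ls)]:
    print(f"{name}: {contrast_db(G_b, G_d, q):.1f} dB contrast")
```

The two cost functions differ in what they constrain: contrast optimisation only shapes the energy ratio between the zones, whereas the least-squares approach also specifies the sound field in the target zone. The regularisation parameter trades achievable contrast against the size of the loudspeaker weights, which is one reason the choice of regularisation affects robustness.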

One difficulty in assessing the spatial properties of sound zone performance was the lack of a suitable metric for quantifying performance. Therefore, the team developed a new metric, planarity [9]. This is the ratio between the energy of the wanted plane wave arriving at a microphone array and the total energy arriving at the array. This was integrated into a novel 'planarity control' cost function, that achieved high contrast between the zones whilst also reducing troublesome interference patterns from the target zone [10]. The planarity control can also be used to create multiple virtual loudspeakers with differing positions, allowing the creation of 2-channel stereo or multichannel reproduction in each sound zone [16].
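Read literally, the description above reduces to a simple energy ratio. The Python sketch below assumes that some direction-of-arrival analysis at the microphone array has already produced an energy estimate for each of a set of candidate plane-wave directions; that analysis step, the function name and the example values are hypothetical, and the published definition in [9] may differ in detail.

```python
import numpy as np

def planarity(direction_energies, wanted_index):
    """Energy of the wanted plane-wave direction divided by the total energy."""
    w = np.asarray(direction_energies, dtype=float)
    total = w.sum()
    if total <= 0.0:
        raise ValueError("total energy must be positive")
    return w[wanted_index] / total

# All energy arrives from one direction: a purely planar field.
single_wave = planarity([0.0, 0.0, 1.0, 0.0], wanted_index=2)

# Energy spread evenly over eight directions: a diffuse-like field.
evenly_spread = planarity(np.ones(8), wanted_index=0)

print(single_wave, evenly_spread)  # 1.0 0.125
```

Values close to 1 indicate that the field in the zone behaves like a single plane wave from the wanted direction, while low values indicate energy arriving from many directions, as in a strong interference pattern.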

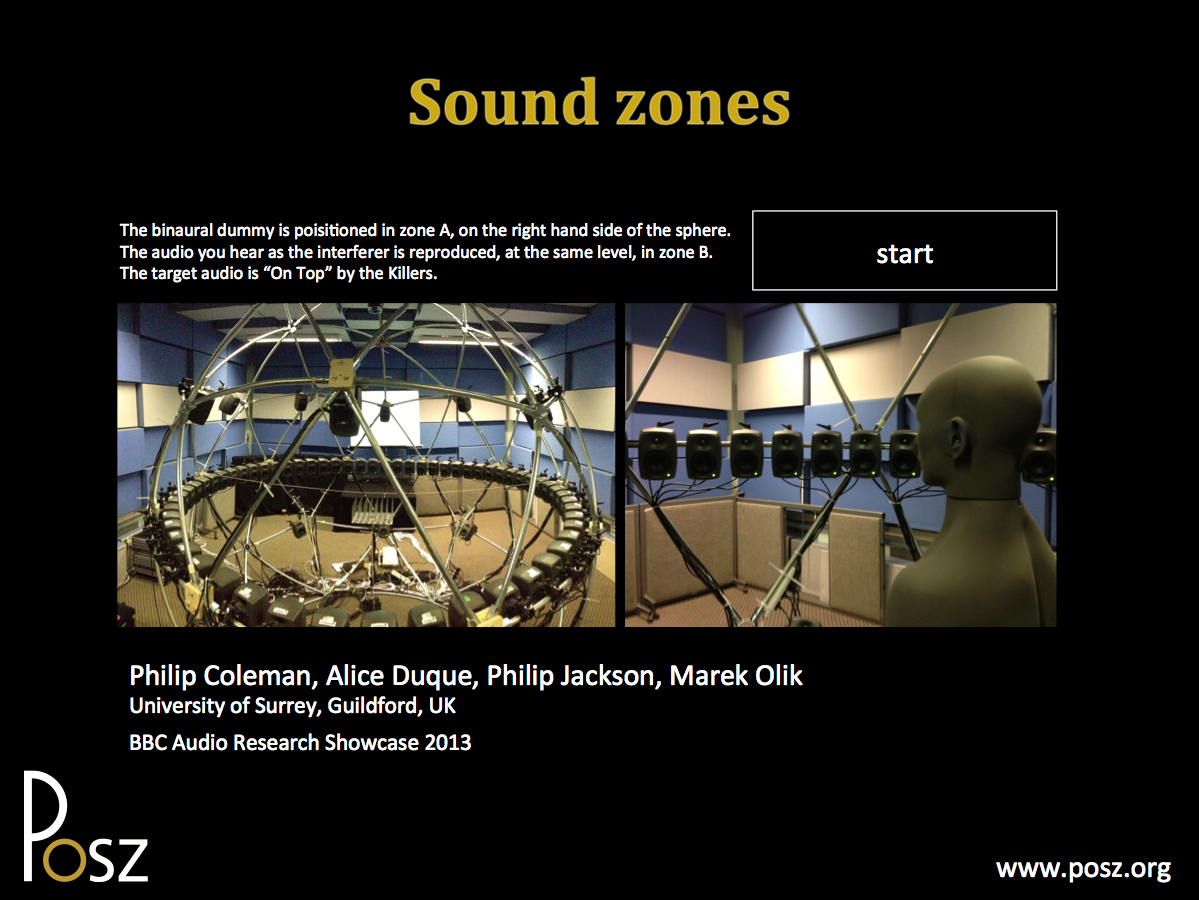

These results have been applied to create sound zone demonstrations using the Surrey Sound Sphere and using a range of systems at Bang and Olufsen's headquarters in Struer, Denmark. In addition, some of the results were demonstrated at the 52nd Audio Engineering Society International Conference at the University.

To demonstrate the sound zone system, we have made binaural recordings in one target zone, with three different methods: pressure matching, planarity control, and 2-channel stereo planarity control. Click the picture below to download a PowerPoint file containing these demonstrations.

Impulse responses of the system shown above (from 60 loudspeakers in a circular array to 864 microphones located in three zones within the circle) can be downloaded from the resources page.

Psychoacoustics:

- Supervisor: Dr Russell Mason

- Co-supervisors: Dr Martin Dewhirst and Dr Chris Hummersone

- Khan Baykaner

- Jon Francombe

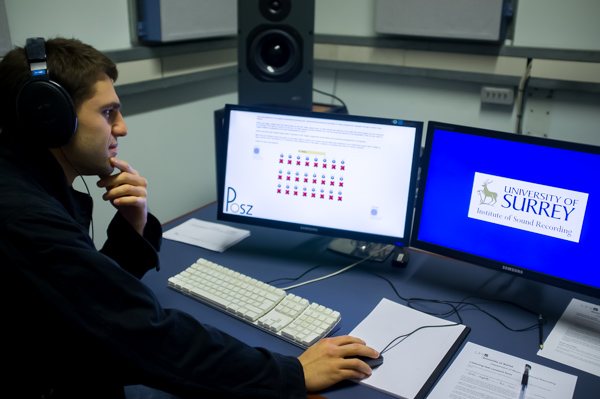

The first stage of the psychoacoustic work was to determine how people describe the situation where there is a target item of audio as well as an interfering audio item. An attribute elicitation experiment was undertaken which produced a large pool of descriptors. This was subsequently reduced to a small set of attributes by groups of listeners. Ratings were made using scales created from these attributes, and ‘distraction’ was selected as being most relevant to audio-on-audio interference situations and used most consistently between participants.

There are likely to be situations in which a sound zone system successfully manages to make the interfering sound inaudible below the target sound; in this situation the interferer has been masked. In order to help detect when this occurs, a physiologically inspired masking threshold prediction model was developed. The model was calibrated and the input windowing and output processing customised to allow for predicting the masking thresholds of representative stimuli (i.e. speech and music), with prediction accuracy within 3 dB RMSE, as reported in two conference papers [4,6]. Following this, the acceptability of these audio-on-audio interference scenarios was examined, and it was found that the acceptability could be reasonably well predicted from the masking threshold model. As described in a conference paper [11], the predictions of this acceptability model account for 76% of the variance in the acceptability data.
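For readers unfamiliar with the two figures of merit quoted above, the Python sketch below shows how a root-mean-square error (RMSE) and a proportion of variance accounted for can be computed from paired predictions and measurements. The data values are invented for illustration and are not taken from the project; the papers may also compute the variance accounted for in a different way (for example, as a squared correlation coefficient).

```python
import numpy as np

def rmse(predicted, measured):
    """Root-mean-square error between predictions and measurements."""
    p, m = np.asarray(predicted, float), np.asarray(measured, float)
    return np.sqrt(np.mean((p - m) ** 2))

def variance_explained(predicted, measured):
    """Coefficient of determination: 1 - residual sum of squares / total."""
    p, m = np.asarray(predicted, float), np.asarray(measured, float)
    ss_res = np.sum((m - p) ** 2)
    ss_tot = np.sum((m - m.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

# Invented masking thresholds in dB, for illustration only
measured = [10.0, 12.0, 15.0, 20.0]
predicted = [11.0, 11.0, 17.0, 19.0]

error_db = rmse(predicted, measured)
r_squared = variance_explained(predicted, measured)
print(f"RMSE = {error_db:.2f} dB, variance explained = {100 * r_squared:.0f}%")
```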

Work has also been undertaken to attempt to predict the perceived distraction of audio-on-audio interference situations, using a statistical model that combines physical parameters measured in a sound zone system. By making measurements of a real or simulated system, predictions can be made about how distracting the resulting sound is, giving an answer in a form that is meaningful to the end-user. Such a predictor will be used to optimise sound zone systems, and provide feedback to users or system designers. Initial results have indicated that a model can be successfully created, but that it needs more training to be widely applicable [5].

The data gathered so far implied that differences in acceptability and distraction occurred due to the presence or absence of speech in the target programme; targets comprising speech were therefore investigated separately, with a focus on perceived intelligibility. A transcription experiment was conducted to gather intelligibility data for interference scenarios. Whether the intelligibility of interfering speech can help explain the acceptability of listening scenarios is currently under investigation, and will contribute to a more detailed understanding of the quality of the listening experience within sound zones.

Combined:

There are many ways in which the combination of the engineering and psychoacoustic work has aided the development of the project. Firstly, the use of listening tests, both formal and informal, has helped to appropriately direct the efforts of the engineering work to make the most perceptually salient advances [2]. They have also been used to evaluate the most appropriate methods for sound zone control [13].

Now that preliminary psychoacoustic prediction models have been developed, they can be applied to the further optimisation of sound zone reproduction systems. For instance, one challenge is to reduce the number of loudspeakers employed for a sound zone system so that it will be practical for domestic use. To achieve this, the perceptual model was included in an optimisation cost function, and it was found that this improved the perceived results compared to a conventional engineering cost function [12].

In order to train the models further, over 200 items of programme material were chosen using a random radio selection method [15]. Subjective experiments were undertaken using these stimuli in order to generate a large dataset of subjective results, such as those described in [14]. These have been used to further train models to predict perceived distraction and acceptability for a range of stimuli.

As the psychoacoustic work develops further, the resulting prediction models will be of more use for optimising the sound zone systems. In addition, a real-time implementation could be used for modifying the programme material to further improve the sound zone performance.

Publications

- [1] Coleman, P., Moller, M., Olsen, M., Olik, M., Jackson, P.J.B., Pedersen, J.A., 2012: 'Performance of optimized sound field control techniques in simulated and real acoustic environments', Journal of the Acoustical Society of America, vol. 131, no. 4, p. 3465.

- [2] Francombe, J., Mason, R., Dewhirst, M. and Bech, S. 2012: 'Determining the threshold of acceptability for an interfering audio programme', 132nd Audio Engineering Society Convention, preprint 8639.

- [3] Francombe, J., Coleman, P., Baykaner, K., Olik, M., Mason, R., Jackson, P.J.B., Dewhirst, M., Hummersone, C., Bech, S. and Pedersen, J.A. 2013: 'Look, no headphones! Multiple listening regions in the same room', University of Surrey Postgraduate Research Conference.

- [4] Baykaner, K., Hummersone, C., Mason, R. and Bech, S. 2013: 'Selection of temporal windows for the computational prediction of masking thresholds', IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Vancouver.

- [5] Francombe, J., Mason, R., Dewhirst, M. and Bech, S. 2013: 'Modelling listener distraction resulting from audio-on-audio interference', 21st International Congress on Acoustics / 165th Meeting of the Acoustical Society of America, Journal of the Acoustical Society of America, vol. 133, p. 3367.

- [6] Baykaner, K., Hummersone, C., Mason, R. and Bech, S. 2013: 'The computational prediction of masking thresholds for ecologically valid interference scenarios', 21st International Congress on Acoustics / 165th Meeting of the Acoustical Society of America, Journal of the Acoustical Society of America, vol. 133, p. 3426.

- [7] Coleman, P., Jackson, P. J., Olik, M., Olsen, M., Moller, M. and Abildgaard Pedersen, J. 2013: 'The influence of regularization on anechoic performance and robustness of sound zone methods', 21st International Congress on Acoustics / 165th Meeting of the Acoustical Society of America, Journal of the Acoustical Society of America, vol. 133, p. 3344.

- [8] Olik, M., Jackson, P. J. and Coleman, P. 2013: 'Influence of low-order room reflections on sound zone system performance', 21st International Congress on Acoustics / 165th Meeting of the Acoustical Society of America, Journal of the Acoustical Society of America, vol. 133, p. 3349.

- [9] Jackson, P. J., Jacobsen, F., Coleman, P. and Abildgaard Pedersen, J. 2013: 'Sound field planarity characterized by superdirective beamforming', 21st International Congress on Acoustics / 165th Meeting of the Acoustical Society of America, Journal of the Acoustical Society of America, vol. 133, p. 3344.

- [10] Coleman, P., Jackson, P.J.B., Olik, M. and Pedersen, J.A. 2013: 'Optimizing the planarity of sound zones', 52nd Audio Engineering Society International Conference on Sound Field Control, Guildford, UK.

- [11] Baykaner, K., Hummersone, C., Mason, R. and Bech, S. 2013: 'The prediction of the acceptability of auditory interference based on audibility', 52nd Audio Engineering Society International Conference on Sound Field Control, Guildford, UK.

- [12] Francombe, J., Coleman, P., Olik, M., Baykaner, K., Jackson, P.J.B., Mason, R., Dewhirst, M., Bech, S. and Pedersen, J.A. 2013: 'Perceptually optimized loudspeaker selection for the creation of personal sound zones', 52nd Audio Engineering Society International Conference on Sound Field Control, Guildford, UK.

- [13] Olik, M., Francombe, J., Jackson, P.J.B., Coleman, P., Olsen, M., Moller, M., Mason, R. and Bech, S. 2013: 'A comparative performance study of sound zoning methods in a reflective environment', 52nd Audio Engineering Society International Conference on Sound Field Control, Guildford, UK.

- [14] Baykaner, K., Hummersone, C., Mason, R., and Bech, S. 2014: 'The acceptability of speech with interfering radio programme material', 136th Audio Engineering Society Convention, preprint 9020.

- [15] Francombe, J., Mason, R., Dewhirst, M., and Bech, S. 2014: 'Investigation of a random radio sampling method for selecting ecologically valid music programme material', 136th Audio Engineering Society Convention, preprint 9029.

- [16] Coleman, P., Jackson, P.J.B., Olik, M. and Pedersen, J.A. 2014: 'Stereophonic personal audio reproduction using planarity control optimization', Proceedings of the 21st International Congress on Sound and Vibration, Beijing.

- [17] Coleman, P., Jackson, P.J.B., Olik, M. and Pedersen, J.A. 2014: 'Numerical optimization of loudspeaker configuration for sound zone reproduction', Proceedings of the 21st International Congress on Sound and Vibration, Beijing.

- [18] Coleman, P., Jackson, P.J.B., Olik, M., Møller, M., Olsen, M. and Pedersen, J.A. 2014: 'Acoustic contrast, planarity and robustness of sound zone methods using a circular loudspeaker array', Journal of the Acoustical Society of America, vol. 135(4), pp. 1929-1940.

- [19] Coleman, P., and Jackson, P.J.B. 2014: 'Planarity panning for listener-centered spatial audio', Proceedings of 55th Audio Engineering Society International Conference on Spatial Audio, Helsinki.

- [20] Coleman, P., Jackson, P.J.B., Olik, M., and Pedersen, J.A. 2014: 'Personal audio with a planar bright zone', Journal of the Acoustical Society of America, vol. 136(4), pp. 1725-1735.

- [21] Francombe, J., Mason, R., Dewhirst, M., and Bech, S. 2014: 'Elicitation of attributes for the evaluation of audio-on-audio interference', Journal of the Acoustical Society of America, vol. 136(5), pp. 2630-2641.

- [22] Olik, M., Jackson, P.J.B., Coleman, P., and Pedersen, J.A. 2014: 'Optimum source geometry for sound zone reproduction with a single reflection', Journal of the Acoustical Society of America, vol. 136(6), pp. 3085-3096.

- [23] Baykaner, K., Francombe, J., Mason, R., Coleman, P., Olik, M., Jackson, P.J.B., and Bech, S. 2015: 'The relationship between target quality and interference in sound zones', Journal of the Audio Engineering Society, vol. 63(1/2), pp. 78-89.

- [24] Francombe, J., Mason, R., Dewhirst, M., and Bech, S. 2015: 'A model of distraction in an audio-on-audio interference situation with music program material', Journal of the Audio Engineering Society, vol. 63(1/2), pp. 63-77.

Theses

- Baykaner, Khan Richard. (2014) Predicting the Perceptual Acceptability of Auditory Interference for the Optimisation of Sound Zones. Doctoral thesis, University of Surrey (United Kingdom).

- Coleman, Philip. (2014) Loudspeaker Array Processing For Personal Sound Zone Reproduction. Doctoral thesis, University of Surrey (United Kingdom).

- Francombe, Jon. (2014) Perceptual Evaluation of Audio-On-Audio Interference in a Personal Sound Zone System. Doctoral thesis, University of Surrey (United Kingdom).

- Olik, Marek. (2015) Personal sound zone reproduction with room reflections. Doctoral thesis, University of Surrey.